Introduction

This document represents a personal reflection on the practical relationship between artificial intelligence and architecture. It is not based on any formal academic research or external sources, but rather on my own experiences and observations over time. My intention is to use this as a living document, updating it as AI continues to evolve and influence the field of architecture. By revisiting and expanding upon these thoughts, I aim to better understand how AI is shaping the profession and its future applications in design and architecture.

UPDATE: April 2026

The Evolving Relationship Between Artificial Intelligence and Architecture

Over the past two years, the most significant change has been not only technological progress but also how widely artificial intelligence has been adopted. In the early phase of generative AI, these tools were used mainly by highly technical users and enthusiasts. Even tools such as ChatGPT were far less commonly used, and many people did not fully understand the difference between basic access and more advanced subscription-based capabilities.

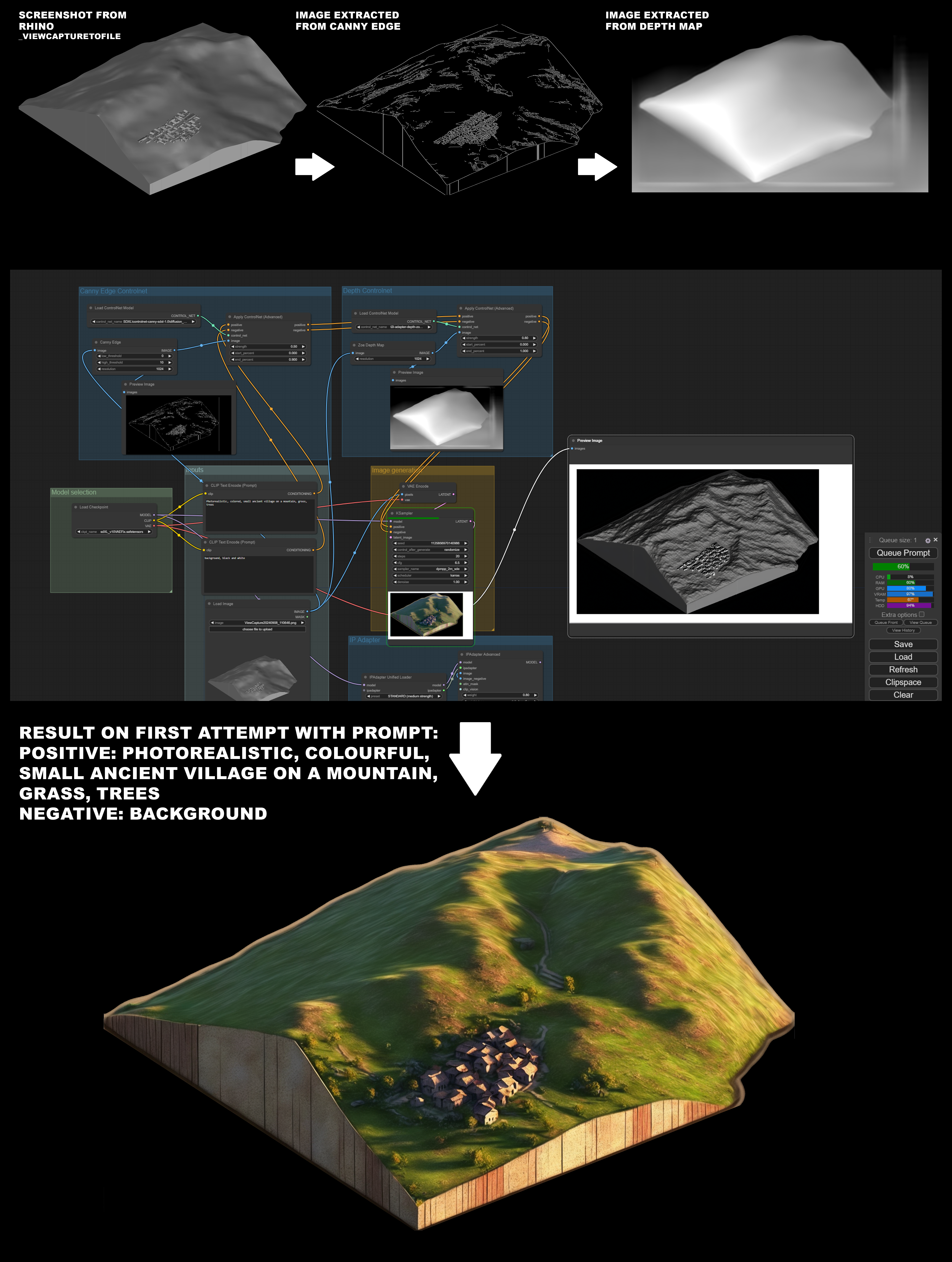

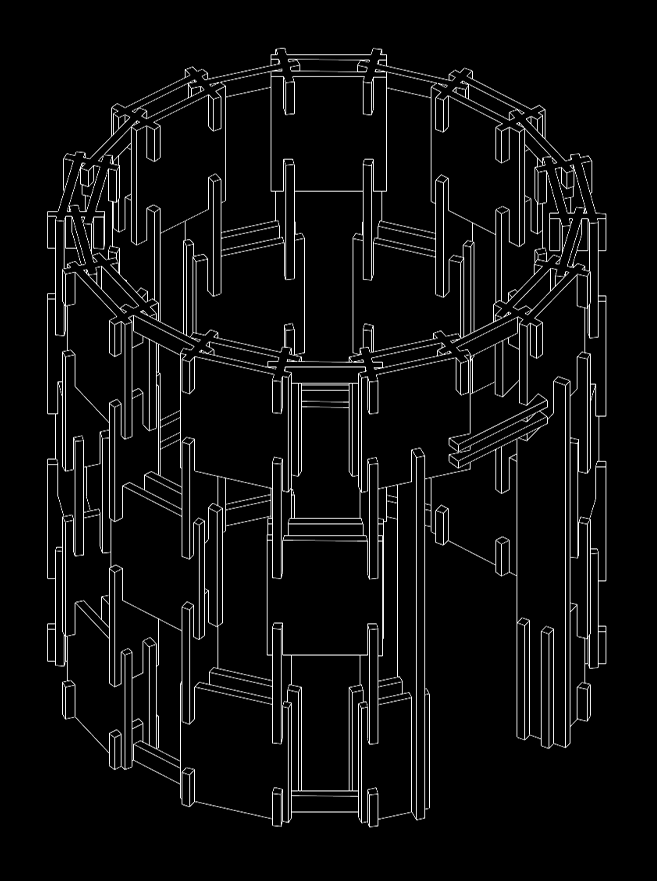

At the time, obtaining usable results from generative systems such as Midjourney, DALL·E or local Stable Diffusion setups often required extensive experimentation. Achieving coherent images frequently meant relying on techniques such as masking, depth maps, ControlNet, and many iterations. The process could be powerful, but it was also slow and often frustrating.

A major shift occurred when AI systems became easier to use and more accessible to a broader audience. Competition between major technology companies accelerated development rapidly. Platforms such as ChatGPT, Gemini, Claude and other systems began improving both usability and reliability. This transformation significantly reduced the barrier to entry and allowed these tools to become part of everyday workflows.

One particularly notable milestone was the arrival of new image generation systems capable of producing highly coherent results with far less manual intervention. Google’s Nano Banana model, for example, demonstrated a remarkable ability to maintain visual coherence, manage complex scenes and produce highly photorealistic outputs across different formats. Compared to earlier systems, the number of iterations required to reach a convincing result decreased significantly.

At the same time, I continued exploring more experimental and cutting-edge workflows through local tools such as ComfyUI. These environments allow much deeper control over the generation process and provide access to rapidly evolving models released through open communities such as Hugging Face and GitHub. Over time, these models have reached levels of coherence and visual quality that approach the results of large commercial systems, while maintaining the flexibility of local workflows.

Another development that has become extremely valuable in practice is the evolution of AI-assisted research and document analysis. Features such as deep search and advanced document synthesis now allow complex information to be analyzed, summarized and verified with a level of speed and clarity that was not possible only a few years ago. This has proven particularly useful when studying technical material, competition briefs or large documents.

In architectural practice, these tools are now integrated across multiple phases of work. They can assist during preliminary study, concept exploration, testing of materials and lighting atmospheres, refinement of renderings, and final visual editing. They also support many parallel tasks such as writing emails, preparing reports, researching competitions, and organizing information more efficiently.

Some technical limitations remain. Precise geometric control is still a challenge in many generative systems, especially when dealing with large image resolutions or technically accurate representations. Techniques such as tiling can help produce higher resolution outputs, but they often require complex workflows and additional computational effort. Another important limitation concerns information reliability: if research results are not carefully verified, AI systems may adapt their answers to conversational context rather than providing fully objective information.
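To make the tiling idea above more concrete, here is a minimal sketch of how a large image can be split into overlapping tiles that are then processed one at a time. The function names (`tile_starts`, `tile_boxes`) and the tile and overlap sizes are my own illustrative assumptions, not the settings of any specific tool; real workflows also blend the overlapping seams, which is not shown here.

```python
def tile_starts(length, tile, overlap):
    """Start offsets of tiles of size `tile` that cover [0, length).

    Neighbouring tiles share at least `overlap` pixels; the last tile is
    placed flush with the far edge, so it may overlap its neighbour more.
    """
    if tile >= length:
        return [0]
    stride = tile - overlap
    if stride <= 0:
        raise ValueError("overlap must be smaller than the tile size")
    starts = list(range(0, length - tile, stride))
    starts.append(length - tile)
    return starts


def tile_boxes(width, height, tile=1024, overlap=128):
    """Return (left, top, right, bottom) boxes covering the whole image."""
    boxes = []
    for top in tile_starts(height, tile, overlap):
        for left in tile_starts(width, tile, overlap):
            boxes.append((left, top, min(left + tile, width), min(top + tile, height)))
    return boxes


if __name__ == "__main__":
    # Hypothetical 4096 x 2048 render split into 1024 px tiles.
    for box in tile_boxes(4096, 2048):
        print(box)
```

Each box would be generated or upscaled separately and pasted back at its position, which is where the extra workflow complexity and computation come from.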

Despite these limitations, certain areas have progressed remarkably. Localized image editing has become very effective, allowing specific parts of an image to be modified while preserving the rest of the composition. AI-assisted writing, translation and text refinement have also reached a level of reliability that makes them highly useful in daily professional tasks. In addition, the generation of audio for presentations, multilingual narration, and structured report writing has become surprisingly coherent and practical.

Looking back, the most important transformation between 2024 and 2026 is not simply the improvement of models. The real shift has been the transition from niche experimentation to widespread everyday use. AI tools are now accessible to a much larger number of professionals, often at relatively low cost compared to their potential impact. In many cases, they reduce the cognitive effort required for routine tasks and allow more time to be dedicated to critical thinking and design decisions.

UPDATE: Sept. 2024

The Practical Relationship Between Artificial Intelligence and Architecture

In 2022, I began exploring the potential of generative artificial intelligence, driven by my curiosity and the desire to enrich my professional practice with new technological tools. From the very first experiments, despite the technical limitations of the time, I saw the significant potential of these systems. Initially, I used Midjourney for generating images from text and ChatGPT for supporting textual content creation. Although both tools were powerful, achieving satisfactory quality required long iterative cycles. Paradoxically, the time involved often exceeded what would have been necessary for manual creation. However, the AI-driven process offered a different and more stimulating experience from a creative standpoint.

A major turning point came with the release of the Stable Diffusion model in 2022, made accessible via the AUTOMATIC1111 (A1111) interface. This tool quickly gained popularity due to its flexibility and the ability to run the model locally on consumer GPUs. For the first time, it was possible to perform complex computations without relying on remote servers, opening new avenues for customization and control, and making these AI systems more widely accessible.

However, I soon realized that the time required to obtain significant results could negatively affect my daily productivity. For this reason, I sought ways to integrate these tools into my workflow in a balanced way, leveraging them to speed up specific tasks without compromising creative control. My early attempts focused on image generation, but initially, the lack of control over the results was a challenge. Often, the generated images were too distant from my intentions in terms of composition, color palette, and forms.

A significant advancement came with the use of extensions such as LoRA and ControlNet, which allowed for more refined control over the models and produced results more aligned with my expectations. ControlNet proved particularly useful in precisely defining the position and structure of elements within images, thus improving the efficiency of the process. Thanks to these tools, I was able to significantly reduce the time spent on image production, making it easier to integrate these technologies into my professional workflow.

Another important development was the introduction of ComfyUI, a platform that runs Stable Diffusion (and other models such as Flux) through a node-based system, similar to those used in software such as Blender, Unreal Engine, and Grasshopper for Rhino. The node-based approach enables control over every stage of the creative process, from initial generation to detailed modification. While this slightly increases the complexity of the process, it offers a much higher level of control and allows each step of the pipeline to be visualized, making the workflow more understandable and easier to improve. As in those programs, nodes also make it possible to combine and optimize different techniques and to customize the results more freely.
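The node-based idea can be illustrated with a very small sketch: each node is a step with named inputs, and the graph is evaluated by resolving those inputs first. This is not ComfyUI's actual API. The `Node` class, `run_graph` function, node names and the string placeholders standing in for images are all hypothetical, and the sketch assumes the graph contains no cycles.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Node:
    name: str
    func: Callable[..., object]
    inputs: list = field(default_factory=list)  # names of upstream nodes


def run_graph(nodes, target):
    """Evaluate `target` and everything it depends on; return all results."""
    by_name = {node.name: node for node in nodes}
    cache = {}

    def evaluate(name):
        # Each node runs once; its result is reused by every downstream node.
        if name not in cache:
            node = by_name[name]
            args = [evaluate(dep) for dep in node.inputs]
            cache[name] = node.func(*args)
        return cache[name]

    evaluate(target)
    return cache


# Strings stand in for real prompts and images in this illustration.
graph = [
    Node("prompt", lambda: "timber pavilion at dusk"),
    Node("sketch", lambda: "massing_sketch.png"),
    Node("generate", lambda p, s: f"image({p} | guided by {s})", ["prompt", "sketch"]),
    Node("upscale", lambda img: f"upscaled({img})", ["generate"]),
]

if __name__ == "__main__":
    for name, value in run_graph(graph, "upscale").items():
        print(f"{name}: {value}")
```

Because every intermediate result is kept, any step can be inspected or swapped without rebuilding the rest, which is the practical benefit I find in node-based tools.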

At the same time, collaborating with the online community has been crucial in the evolution of my tools. Since many of these models are open-source, the developer community has played a key role in improving and customizing the technologies. This dynamic, ever-evolving environment has made it possible to quickly resolve issues and has led to constant innovation.

Meanwhile, ChatGPT became a valuable asset for time-consuming tasks such as text revision, quick research, and error checking, streamlining my daily workflow. Thanks to these tools, I was able to dedicate more time to creative and strategic activities, optimizing how I manage my time.

Over the months, the constant evolution of these technologies has led to significant improvements. The models have become more reliable, controllable, and capable of delivering consistent results. This has made AI technologies increasingly usable on a larger scale, with paid usage options further expanding their accessibility to a broader audience.

Artificial Intelligence in Architectural Design

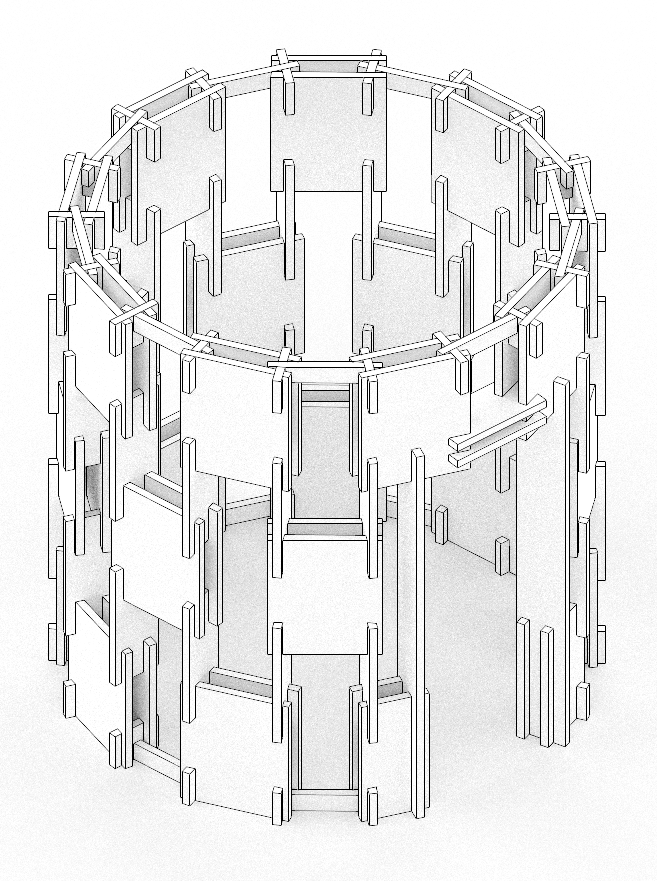

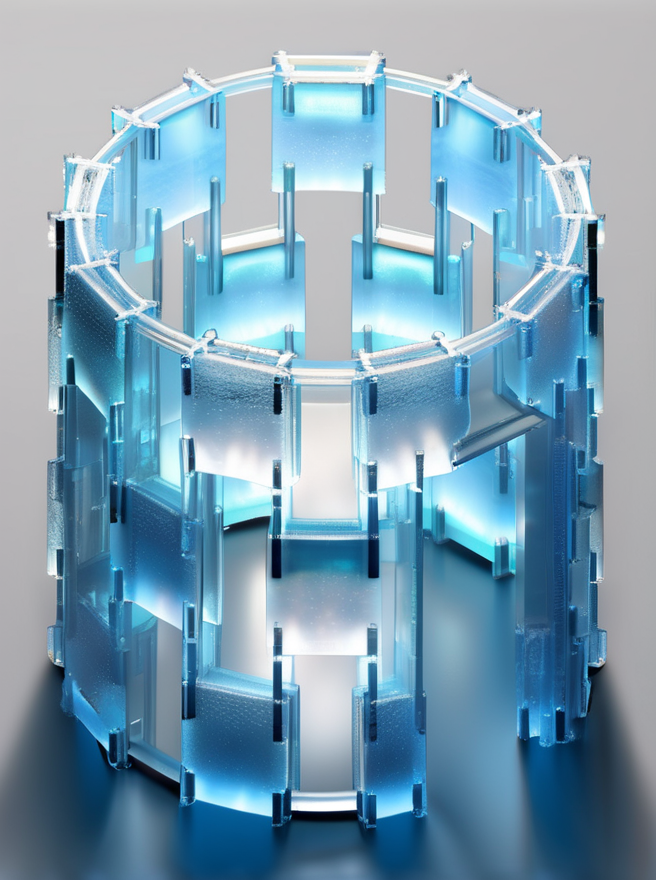

One of the most fascinating aspects for me has been understanding how AI can be concretely integrated into an architect’s creative process. These tools have proven crucial in the early stages of a project, especially in the conceptual phase. Starting from simple sketches or textual descriptions, it is possible to quickly generate images that incorporate textures, environments, and different atmospheres. This saves hours that would otherwise be spent manually creating initial visualizations of a project, and it makes it easy to iterate rapidly and explore variations.

Tools such as inpainting and outpainting have also proven extremely useful for modifying or expanding existing images. These techniques are particularly valuable for refining existing renderings or adding new elements without having to start from scratch. These tools, once reserved for professionals with advanced skills, are now accessible and can be used to improve the efficiency of the creative workflow.
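The principle that lets inpainting change one region while leaving the rest untouched is a mask. The sketch below shows only the final compositing step, under the assumption that a new version of the image has already been generated: where the mask is 1 the generated pixel is used, where it is 0 the original is kept, and values in between blend the two. The function name `blend_with_mask` and the tiny grayscale grids are illustrative, not taken from any specific tool.

```python
def blend_with_mask(original, generated, mask):
    """Per-pixel blend: mask 1.0 takes the generated pixel, 0.0 keeps the original."""
    if not (len(original) == len(generated) == len(mask)):
        raise ValueError("images and mask must have the same number of rows")
    result = []
    for row_o, row_g, row_m in zip(original, generated, mask):
        if not (len(row_o) == len(row_g) == len(row_m)):
            raise ValueError("images and mask must have the same row length")
        result.append([(1.0 - m) * o + m * g for o, g, m in zip(row_o, row_g, row_m)])
    return result


# Tiny grayscale example: only the right-hand column is replaced,
# with a soft edge in the middle of the first row.
original = [[10, 10, 10], [10, 10, 10]]
generated = [[200, 200, 200], [200, 200, 200]]
mask = [[0, 0.5, 1], [0, 0, 1]]

if __name__ == "__main__":
    for row in blend_with_mask(original, generated, mask):
        print(row)
```

Outpainting follows the same logic with a larger canvas, where the mask marks the newly added border instead of an interior region.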

The integration of these systems into the design process does not in any way mean replacing the architect’s creative work. Rather, they amplify the architect’s capabilities, allowing for the exploration of new avenues and improving productivity, leaving more room for conceptual development and the refinement of details.

Final Thoughts and Future Perspectives

Looking to the future, I am convinced that artificial intelligence will never fully replace the work of an architect, but will increasingly act as a powerful ally, expanding the architect’s capabilities. AI-based tools allow for the rapid exploration of multiple design solutions, the optimization of production processes, and the enhancement of final results. However, the human touch, intuition, and design sensitivity will always remain irreplaceable.

I imagine a future where the collaboration between humans and these advanced systems will become more and more integrated, with AI serving as an extension of creative thought, enabling experimentation and innovation in ways that are unimaginable today. The key will be maintaining control over the process, using these tools to amplify our abilities, not to replace them. Continuous innovation and learning will be essential to staying competitive in a world where technology becomes increasingly integrated into the creative process.